The cost-of-change curve may be one of the most widely repeated “facts” in software development that is no longer true in the way most people think it is.

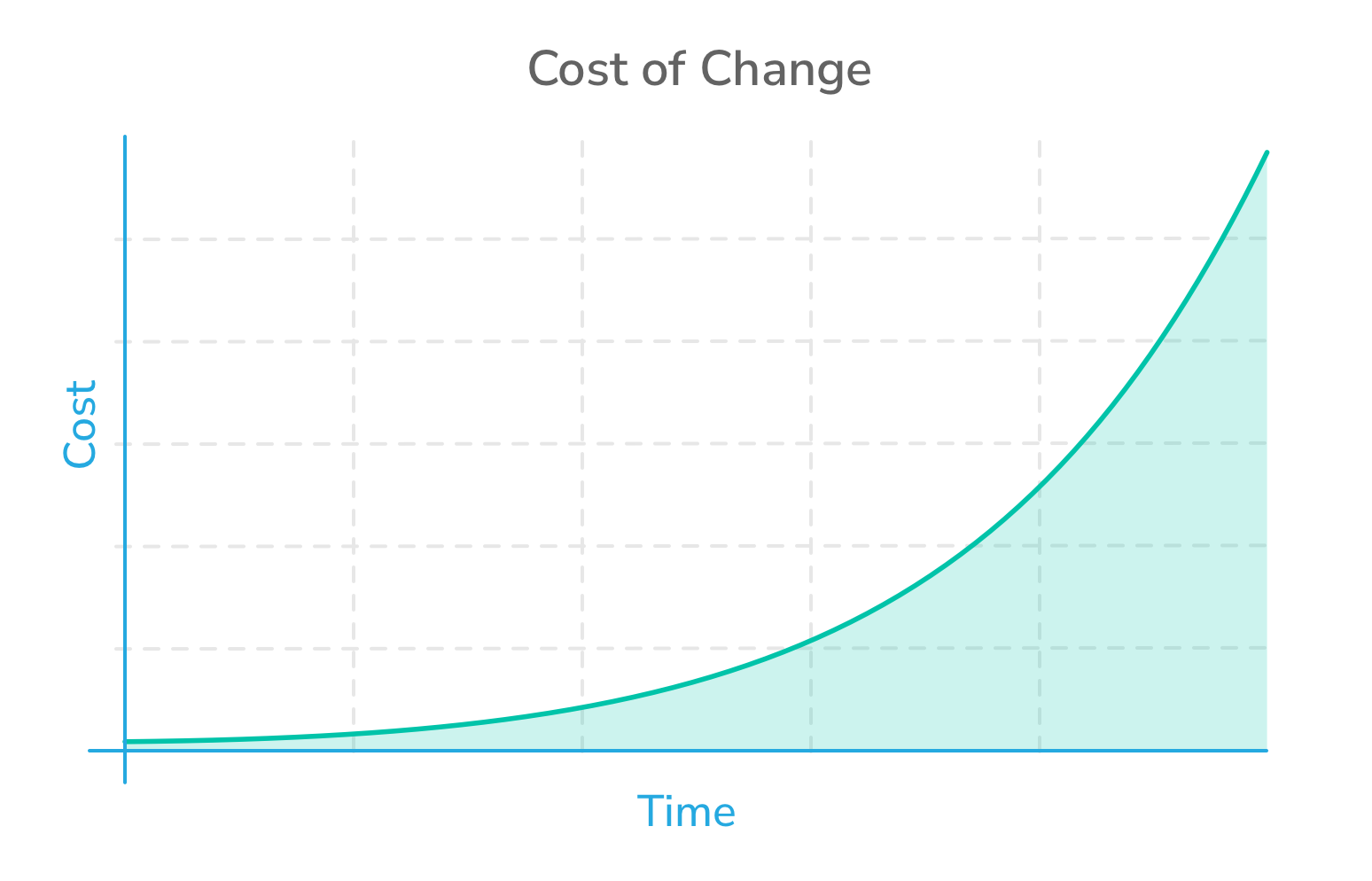

For decades, software people have warned that the later you make a change, the more expensive it becomes. Many of us have seen this expressed as a steep curve: changes are cheap early in a project, but become dramatically more expensive later—especially after release.

The nearby image shows this curve, which Professor Barry Boehm proposed based on his research.

This curve has been influential. It has shaped how teams plan, how managers manage, and how organizations justify heavy upfront analysis.

But while the cost-of-change curve was once a useful warning, it has become more of an unexamined assumption: a true observation from an earlier era that we keep repeating long after the world that produced it has changed.

The curve hasn’t disappeared, but it’s far flatter than most people assume.

When I say cost of change, I mean the time and effort required to modify working software after you’ve already started building it.

Boehm Was Right… for the World He Studied

Boehm’s work in the 1970s and 1980s helped define modern software engineering economics. His research was serious, credible, and grounded in the types of projects that were common at the time.

And in that environment, the curve made perfect sense.

Software was expensive to build. Tools were primitive. Integration was painful. Testing was often manual. Deployment was slow and risky. If you discovered a major requirement change late, you might be looking at months of redesign, recoding, and retesting.

In the 1970s and 1980s, late change really was disastrous.

The problem isn’t that Boehm was wrong. The problem is that we treated his findings as if they were timeless. Boehm gave us a snapshot. Over time, we turned it into a law of nature.

The Curve Has Been Flattening for a Long Time

Even before agile became mainstream, the cost of change was already falling.

Software development gradually became less fragile, less manual, and less dependent on heroics. Over time, the curve flattened. Changes still cost more later in development, but not nearly as much as they once did.

Three major forces drove this flattening.

First, IDEs and other modern development tools made coding and debugging dramatically faster. Debugging shifted from hours of guesswork and print statements to minutes of inspection, breakpoints, and step-through execution.

Second, automated testing and continuous integration reduced the fear of breaking things. Teams could change code and know within minutes whether they had damaged something important.

Third, modular architectures changed how we build systems. We learned to assemble software from components, libraries, services, and reusable pieces. Changes stopped rippling through entire systems the way they once did.

These weren’t minor improvements. They fundamentally changed the economics of software development.

The Death of “Prototype vs Build”

If you started in software long enough ago, you may remember the era when prototyping was a big deal.

In the 1980s and into the 1990s, there was a strong push toward prototyping because building real systems was so expensive. Tools emerged that let teams create convincing UI simulations without actually building the underlying software.

I remember using a tool called Dan Bricklin’s Demo, which allowed you to prototype the entire UI of a system. Buttons worked. Forms could be filled in. Screens transitioned. To a user, it could look like a functioning application—but behind the scenes, it was carefully scripted. The “system” wasn’t real software at all, just a convincing illusion.

That kind of prototyping mattered because it was dramatically cheaper than building the real thing.

Today, that gap is shrinking fast.

With modern tools, and now with AI assistance, the cost of building a working version of software has fallen so dramatically that it’s no longer necessary to build extensive prototypes first.

That shift alone should tell us something: we are no longer living in the same cost-of-change world that produced Boehm’s curve.

Agile Was Built on the Assumption That Change Was Affordable

When agile arrived, it didn’t magically make change cheap. Instead, agile recognized something that was already becoming true: change was no longer prohibitively expensive, and teams could finally take advantage of that reality.

Kent Beck captured this perfectly with the subtitle of Extreme Programming Explained: “Embrace Change.”

That subtitle wasn’t a motivational slogan. It was an economic argument.

Beck was essentially saying: if we can keep the cost of change low enough, we don’t have to fear change—we can welcome it.

Agile worked not because it reduced the cost of change to zero, but because it assumed the cost of change could be kept low enough to tolerate continuous learning.

Agile practices flattened the curve even further. Short iterations meant teams got feedback sooner. Customer collaboration reduced the risk of building the wrong thing for months. Backlogs made it easier to change direction without redrawing a project-long Gantt chart. Refactoring encouraged teams to keep designs flexible rather than brittle.

Agile didn’t eliminate the cost of change. But it reduced it by shortening feedback cycles and encouraging adaptability.

AI Is Flattening the Curve Again

Now we’re entering another major shift.

AI-assisted development tools are reducing the cost of change in a way that may be even more dramatic than what we saw with agile.

Why?

Because AI reduces the amount of human time required to write and revise code.

It’s a simple point, but the implications are enormous.

When coding becomes faster, experimentation becomes cheaper. Teams can try an idea, see if it works, and revise it quickly. They can explore alternatives without paying the traditional penalty of “wasted development effort.”

AI is making code feel less like construction and more like revision.

As AI makes it easier to modify software, something interesting happens: the bottleneck shifts.

The cost of change becomes less about writing code and more about waiting for feedback.

In other words, the primary cost of change increasingly becomes feedback delay, not development effort.

What Hasn’t Gotten Cheaper

If all this sounds like good news, it is. But it doesn’t mean software development has become effortless.

Understanding users is still hard. Product discovery is still hard. Organizational decision-making is still hard. AI can help us analyze data or prepare for interviews, but it hasn’t eliminated the need to deeply understand what users need and why.

The hard part hasn’t disappeared—it has moved.

For decades, our premise has been that coding was the hard part. That is no longer true. Today, teams may spend more time understanding user needs and getting feedback than they spend writing code.

But once we have feedback, acting on it is cheaper than ever.

That is the key change in software economics.

Requirements Don’t Need to Be Perfect—They Need to Be Revisable

One of the classic lessons people take from Boehm’s curve is that we must lock requirements early. If late change is expensive, the logical response is to prevent it.

But locking requirements early has a hidden cost.

The earlier you lock down requirements, the more likely you are to build the wrong thing, because early in a project we don’t yet know what users truly need. We don’t know what they’ll respond to. We don’t know which assumptions are wrong.

Avoiding late change by locking requirements early is an admirable goal, but it has to be weighed against the value of early discovery. The sooner we can iterate on functionality with users, the sooner we can eliminate misunderstandings and misinterpretations, before they become expensive.

What This Looks Like in Practice

Not long ago, a late requirement change might mean weeks of work: update the UI, modify backend logic, change database structures, update test scripts, coordinate integration, and hope nothing broke in production.

Today, with modern tools and AI assistance, a developer can often implement that same kind of change in hours or days—especially if the system is well-tested and built with modular components. The work still matters, but it no longer has to be a crisis.

The economics are simply different now.

So What Should Managers and Teams Do Differently?

This matters because many organizations still run approval processes designed for an era when code changes were slow and risky.

In many organizations, the slowest part of delivery isn’t coding. It’s waiting for permission.

If the cost of change is falling, then the biggest risk isn’t changing too late.

The biggest risk is waiting too long to learn.

This is where the management mindset must evolve.

We should still do discovery. We should still think carefully. We should still try to understand users. But we no longer need to treat a specification document as something that must be perfected before coding begins.

Instead, we can treat the specification as something that evolves alongside the product.

We can iterate on requirements the way we iterate on code.

And we can do that because the cost of making changes has dropped.

The Cost-of-Change Curve Isn’t Flat—But It’s Dramatically Flatter

None of this means that change is free.

If we misunderstand our users badly enough, we may still have to iterate ten times instead of two. That’s real cost. There are still real consequences to getting things wrong.

But the curve is far flatter than it was even a few years ago.

It has been flattening for decades. Agile accelerated that trend. And AI is accelerating it again.

If You Still Fear Change, You’re Managing Like It’s 2005

Agile teams have been saying “embrace change” for years.

AI makes that idea more practical than ever.

If you still manage software projects as if late change is catastrophic, you may be using a mental model that was valid decades ago—but no longer reflects today’s reality.

The cost of change hasn’t disappeared.

But it has come down so much that we can stop treating change as failure.

Instead, we can treat change as learning.

Modern software development rewards adaptability more than up-front accuracy.

Stop trying to perfect requirements. Instead, perfect your feedback loop.

Last update: March 3rd, 2026