Key Takeaways

- To successfully advance Agentic AI initiatives, you need Agentic-Ready Data: the highest-quality data that is integrated, governed, and enriched for AI, automation, and analytics initiatives across the enterprise.

- The latest Precisely Data Integrity Suite updates help teams build trusted pipelines, share reusable data products, and give AI direct access to APIs through a Precisely-hosted MCP server.

- By preparing data specifically for Agentic AI, organizations can move beyond experimentation and scale AI applications with confidence.

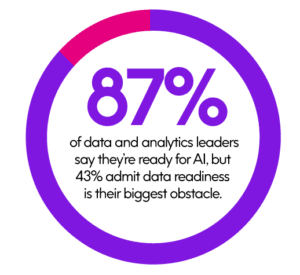

AI adoption and investment continue to rise. But for most organizations, there’s still a widening gap between what AI could do and what it’s actually doing – and the root cause isn’t the models. It’s the data.

Enterprise data today is fragmented across systems, riddled with inconsistencies, and difficult to access programmatically. Even when teams manage to get data into a usable state, it’s rarely reusable – rebuilt from scratch for each new project, shared informally, and governed inconsistently.

And when it’s finally time to connect that data to an AI application or agent-driven workflow, developers and builders face a maze of custom integrations, manual configurations, and access challenges that slow everything down.

The latest advancements in the Precisely Data Integrity Suite are designed to solve these challenges – not by adding another layer of tooling, but by addressing the fundamental data-readiness problem at the center of every stalled AI initiative.

New Capabilities to Unlock Agentic-Ready Data

The latest Data Integrity Suite enhancements introduce capabilities that help organizations achieve Agentic-Ready Data, enabling them to build, share, and use AI at scale. Here’s a snapshot of what that means for organizations like yours:

- Data Integration Agent: Reduce manual setup and accelerate time to value with AI-assisted data replication pipeline design.

- Data Product Marketplace: Publish and share trusted, reusable data products across teams and partners in a governed environment – delivered through our partnership with Huwise.

- New APIs for Data Integration, Data Quality, and Data Catalog: Enable automation and integration into AI-driven workflows with full programmatic control over pipelines, quality rules, and metadata.

- Precisely-hosted Model Context Protocol (MCP) Server: Give AI agents easy, secure access to the Suite APIs without the need for custom integrations.

How to Achieve Agentic-Ready Data in the Data Integrity Suite

There’s no shortage of powerful AI models, platforms, and frameworks available today. What’s in short supply is data that’s ready for those systems to use – data that is accurate, consistent, and contextualized.

This is what we call Agentic-Ready Data: the highest-quality data that is integrated, governed, and enriched so that AI agents, automation systems, and analytics platforms can operate on it with confidence.

Without it, AI outputs are unreliable, decisions are risky, and scaling beyond a handful of pilot use cases becomes nearly impossible.

The latest updates to the Suite create a more direct and governed path to Agentic-Ready Data, allowing organizations to:

- Build trusted data pipelines

- Share that data as governed, reusable products

- Make APIs directly usable by AI systems and applications

Let’s break that down further with a closer look at the enhancements.

Build: Accelerate Data Pipelines with an AI-Powered Integration Agent

Building reliable data pipelines can be one of the most time-consuming steps in preparing data for agentic AI and enterprise analytics initiatives.

Teams spend considerable time on manual setup, schema mapping, validation, and troubleshooting, often relying on a small number of specialists who carry deep institutional knowledge.

The new Data Integration Agent in the Precisely Data Integrity Suite changes that equation. Your teams can now leverage AI-assisted guidance to design and configure data replication pipelines without the significant manual effort previously required.

By reducing the repetitive, error-prone work that slows teams down, you can focus on higher-value decisions and get data flowing faster. The Data Integration Agent joins the previously announced Gio AI Assistant and a growing collection of specialized agents for data quality, location intelligence, and enrichment.

Together, these agents form a coordinated, governed approach to AI-assisted data management.

If you’re onboarding new data sources, migrating to the cloud, or scaling integration across business units, this means dramatically shorter time-to-value and greater consistency across pipelines.

Share: Turn Trusted Data into Reusable Products

Once data is trusted, the next challenge is making it accessible, not just to one team or one project, but across the organization and beyond.

Today, most enterprises rebuild data for each use case. The same customer dataset gets cleaned, transformed, and validated separately by marketing, risk, operations, and analytics. It wastes time, introduces inconsistencies, and creates governance blind spots.

The Data Product Marketplace, delivered through our partnership with Huwise, brings a fundamentally different approach. Now, you can publish and share trusted data products with built-in validation, governance, and continuous monitoring, so teams can discover and reuse high-integrity data across your business, collaborate with external partners, and power analytics and AI initiatives without rework.

This is a critical shift. Instead of treating data as a byproduct of individual projects, organizations can manage it as a strategic, reusable asset that compounds in value every time it’s shared, enriched, and applied to a new use case.

Use: Give AI Agents Direct, Governed Access to APIs Through a Precisely-Hosted MCP Server

Even with trusted, shareable data, there’s still a last-mile challenge: getting that data into the critical applications and agentic AI workflows that depend on it, without building custom integrations for every connection point.

New APIs for Data Integration, Data Quality, and Data Catalog provide full programmatic control over pipelines, quality rules, and metadata. These APIs enable you to automate workflows, monitor data health, and integrate data management directly into AI-driven processes.

Building on this, the new Precisely-hosted Model Context Protocol (MCP) server makes these Suite APIs available to AI agents and tools, so they can securely discover, access, and use them without custom integration.

If APIs were designed for developers, MCP is designed for AI. It provides a standard way for AI systems to find and interact with data services, removing the friction that has traditionally made scaling AI applications so difficult. This builds on the previously released MCP server focused on location intelligence and data APIs, which is now expanding to cover the full breadth of the Data Integrity Suite.
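To make the "discover, then use" idea concrete, here is a minimal Python sketch of the client side of an MCP exchange. MCP messages are JSON-RPC 2.0, and `initialize`, `tools/list`, and `tools/call` are methods defined in the MCP specification. The protocol version string is one published spec revision. The tool names, descriptions, and arguments are hypothetical placeholders, not Precisely's actual tools. The sketch only builds and inspects messages. It does not connect to the Precisely-hosted server, whose endpoint and authentication are not described here.

```python
import itertools
import json
from typing import Optional

# MCP messages are JSON-RPC 2.0; each request carries a unique id.
_ids = itertools.count(1)


def make_request(method: str, params: Optional[dict] = None) -> dict:
    """Build a JSON-RPC 2.0 request object of the kind MCP uses."""
    msg = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        msg["params"] = params
    return msg


def initialize_request(client_name: str, version: str) -> dict:
    # First message of a session: the client announces its protocol
    # version, capabilities, and identity before discovering tools.
    return make_request("initialize", {
        "protocolVersion": "2024-11-05",
        "capabilities": {},
        "clientInfo": {"name": client_name, "version": version},
    })


def list_tools_request() -> dict:
    # Discovery: ask the server which tools (wrapped API operations) it exposes.
    return make_request("tools/list")


def call_tool_request(name: str, arguments: dict) -> dict:
    # Invocation: call one discovered tool with JSON arguments.
    return make_request("tools/call", {"name": name, "arguments": arguments})


def find_tool(list_result: dict, keyword: str) -> Optional[dict]:
    """Return the first tool whose name or description mentions `keyword`."""
    for tool in list_result.get("tools", []):
        text = (tool["name"] + " " + tool.get("description", "")).lower()
        if keyword.lower() in text:
            return tool
    return None


# HYPOTHETICAL tools/list result; real Precisely tool names will differ.
sample = {"tools": [
    {"name": "catalog_search",
     "description": "Search data catalog metadata",
     "inputSchema": {"type": "object"}},
    {"name": "quality_run_rules",
     "description": "Run data quality rules on a dataset",
     "inputSchema": {"type": "object"}},
]}

tool = find_tool(sample, "quality")
if tool is not None:
    # "customers" is a made-up dataset argument for illustration.
    print(json.dumps(call_tool_request(tool["name"], {"dataset": "customers"})))
```

The agent never needs a hand-written client for each API. It reads tool names, descriptions, and input schemas from `tools/list` and chooses what to call at runtime. That is the custom-integration work MCP is meant to remove.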

For developers and builders, this means less time spent on plumbing and more time creating applications that deliver business value.

Scale AI with Agentic-Ready Data

As Agentic AI adoption grows, success depends entirely on the quality, governance, and accessibility of the data behind it.

Organizations that invest in Agentic-Ready Data – data purpose-built for Agentic AI – are far better positioned to move beyond pilots and into production. Those that don’t often remain stuck rebuilding data, managing brittle integrations, and limiting AI to low-impact use cases.

The latest enhancements to the Precisely Data Integrity Suite help teams move faster by making trusted data easier to build, easier to share, and easier for AI systems to use – without custom work or added complexity.

The result is a data foundation designed for AI systems that are expected to operate, adapt, and scale with confidence.

Explore the latest advancements in the Data Integrity Suite

The post What the Latest Advances in Agentic-Ready Data Mean for Scalable AI appeared first on Precisely.