These days, it’s difficult to find a business journal, quarterly earnings call, industry white paper, or strategy presentation on business transformation that isn’t centered on Artificial Intelligence (AI). Modern AI represents a fundamental shift in how organizations approach content consumption, interpretation, and generation, enabling businesses to augment and automate a wide range of tasks previously requiring deep expertise and years of specialized knowledge.

But for all the attention garnered by AI’s ability to understand and produce unstructured content such as text, images, and audio, many core business processes have long relied on classical Machine Learning (ML), a different though related technology that produces predictive labels from structured data inputs (Figure 1). Thus far, the transformative power of AI has left classical ML largely unchanged.

The persistence of traditional ML workflows stems from their inherent complexity and labor intensity. Data scientists routinely spend upwards of 80% of their time on activities that occur before model training even begins: preparing and validating structured data inputs, engineering features, and selecting the right model class. Moreover, as underlying data distributions shift and model performance degrades over time, this work is not a one-time investment but an ongoing cycle of monitoring, debugging, and retraining.

At scale, this challenge intensifies. Organizations deploying hundreds, if not thousands, of ML models rely on automated experimentation frameworks to evaluate thousands of parameter combinations. But even automation cannot overcome fundamental resource constraints.

The reality is stark: companies must choose which models receive optimization attention and which run “good enough” given limited resources and the need to turn around business results promptly. But the emergence of new AI models focused on structured data inputs and predictive outputs may finally offer a path forward.

Video 1. Interacting with the TabPFN model as part of the Databricks solution accelerator

Introducing TabPFN, an AI Model for Machine Learning

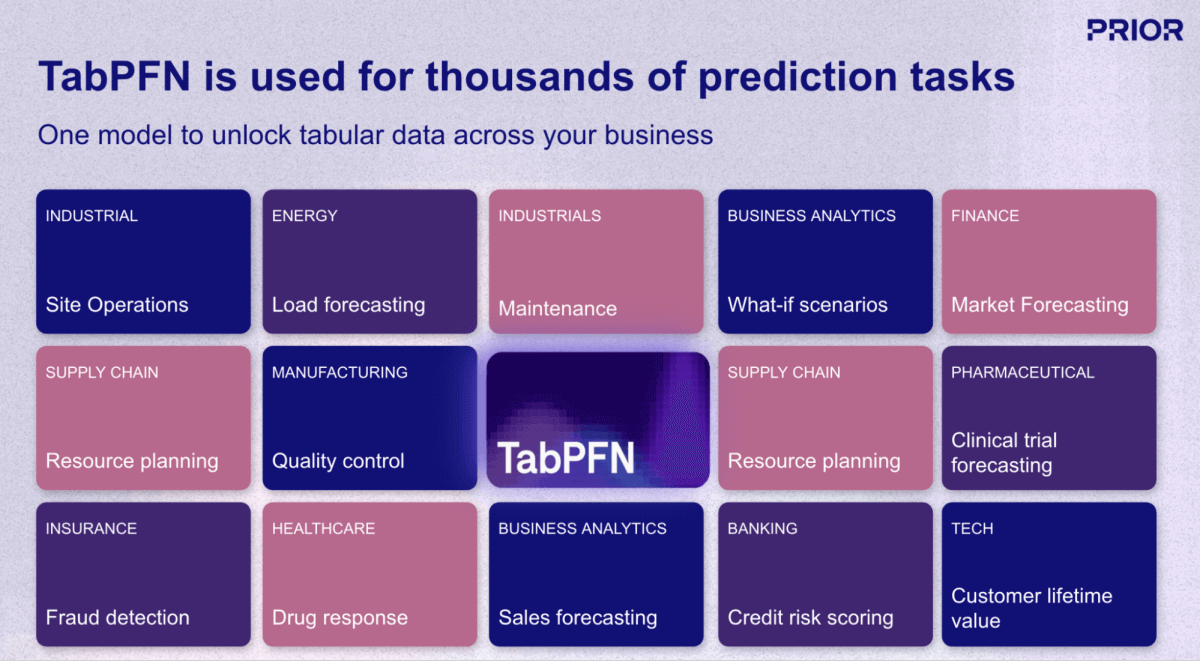

One of the most promising developments in this space is TabPFN, a foundation (AI) model from Prior Labs that fundamentally reimagines the machine learning (ML) workflow for structured data. Unlike traditional ML approaches that require building and training a unique model for each prediction task, TabPFN applies the same “pre-trained, ready-to-use” paradigm from LLMs to tabular business data. The model was pre-trained on over 130 million synthetic datasets, effectively “learning how to learn” from structured data across virtually any domain or use case (Figure 1).

Collapsing the ML Timeline

The implications for ML productivity are dramatic. Where traditional approaches require data scientists to invest hours or days in data preparation, feature engineering, model selection, and hyperparameter tuning, TabPFN delivers production-grade predictions in a single forward pass, typically measured in seconds.

The model handles raw inputs directly, automatically managing missing values, mixed data types, categorical and text features, and outliers without requiring the extensive preprocessing that typically consumes the majority of data science effort. Perhaps most significantly, TabPFN eliminates the ongoing maintenance burden of model retraining: as new data becomes available, organizations simply update the model’s context rather than initiating a new training cycle.
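The context-update idea can be sketched in a few lines. This is a minimal illustration, not the Prior Labs or Databricks implementation: `refresh_context` and the `MajorityClass` stand-in are hypothetical names, the `max_rows` cap is an illustrative parameter, and the only assumption made about the model is that it exposes scikit-learn-style `fit` and `predict` methods.

```python
from collections import Counter
from typing import Any, List, Sequence, Tuple


class MajorityClass:
    """Hypothetical stand-in so the sketch runs without extra packages.

    It only mimics the fit/predict interface; it is not TabPFN.
    """

    def fit(self, X: Sequence[Sequence[Any]], y: Sequence[Any]) -> "MajorityClass":
        # Remember the most frequent label in the supplied context.
        self.label_ = Counter(y).most_common(1)[0][0]
        return self

    def predict(self, X: Sequence[Sequence[Any]]) -> List[Any]:
        return [self.label_ for _ in X]


def refresh_context(
    model: Any,
    rows: Sequence[Sequence[Any]],
    labels: Sequence[Any],
    new_rows: Sequence[Sequence[Any]],
    new_labels: Sequence[Any],
    max_rows: int = 1000,
) -> Tuple[List[Sequence[Any]], List[Any]]:
    """Append newly labeled rows, keep the most recent max_rows, and re-fit."""
    if max_rows <= 0:
        raise ValueError("max_rows must be positive")
    rows = (list(rows) + list(new_rows))[-max_rows:]
    labels = (list(labels) + list(new_labels))[-max_rows:]
    # For an in-context model, this call replaces a full retraining cycle.
    model.fit(rows, labels)
    return rows, labels


model = MajorityClass()

# Initial context: three labeled rows.
rows, labels = refresh_context(
    model, [], [], [[1.0, 0.0], [2.0, 1.0], [3.0, 1.0]], ["no", "no", "yes"]
)
before = model.predict([[4.0, 0.0]])

# New labeled data arrives; only the four most recent rows are kept.
rows, labels = refresh_context(
    model, rows, labels,
    [[5.0, 1.0], [6.0, 0.0], [7.0, 1.0]], ["yes", "yes", "yes"],
    max_rows=4,
)
after = model.predict([[4.0, 0.0]])
print(before, after, len(rows))
```

With the open-source `tabpfn` package installed, an instance of its `TabPFNClassifier` could presumably be passed in place of the stand-in, since it follows the same `fit`/`predict` convention.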

Performance Without the Trade-Offs

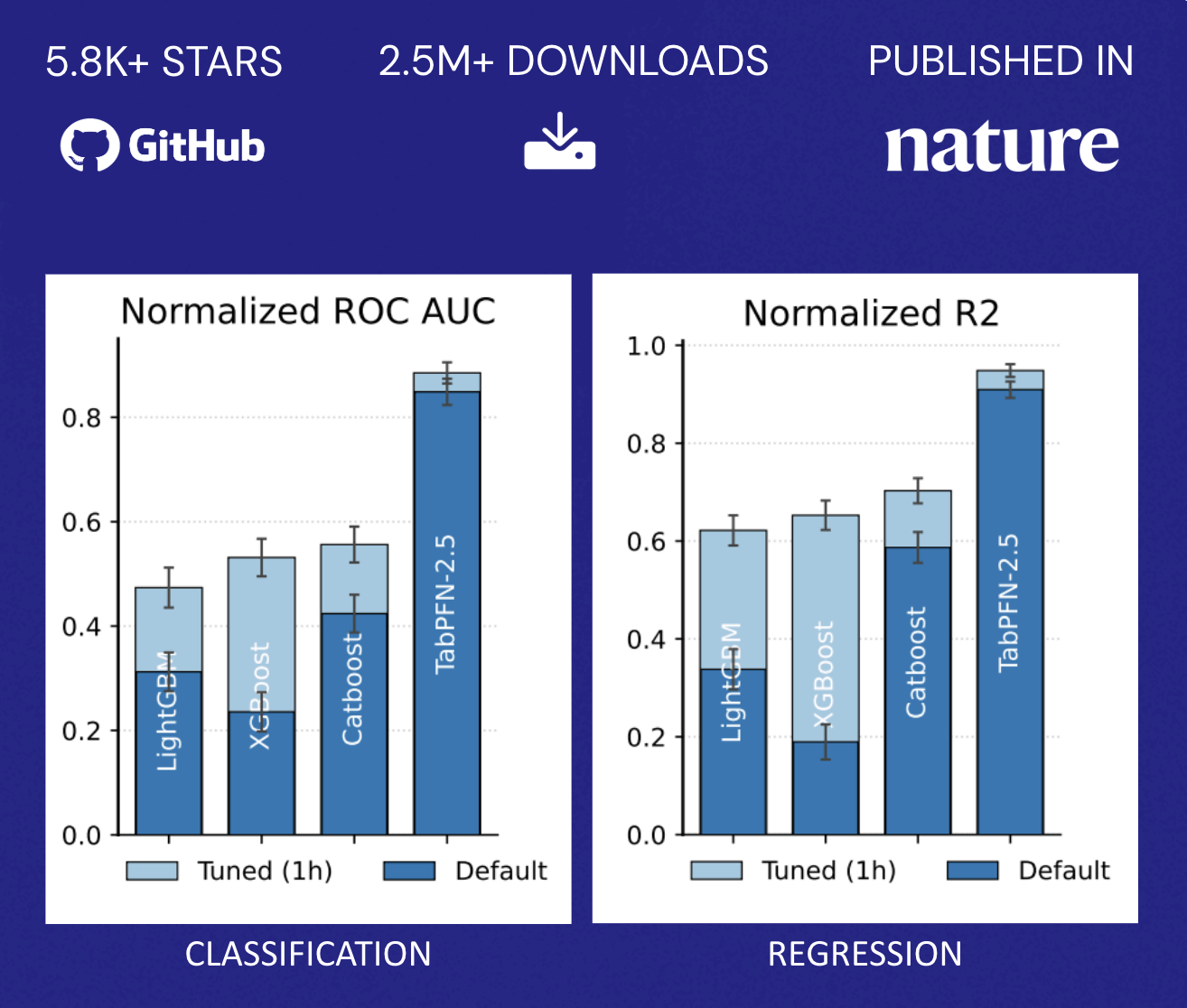

TabPFN exceeds the accuracy of traditional methods that require hours of automated tuning. This performance profile fundamentally alters the economics described earlier: organizations no longer face a binary choice between model accuracy and resource allocation. Instead, they can rapidly deploy predictive capabilities across a broader range of use cases without proportionally scaling their data science teams, democratizing ML beyond the handful of highest-value applications that typically justify dedicated optimization efforts (Figure 2).

Scaling AI’s Impact to Structured Prediction

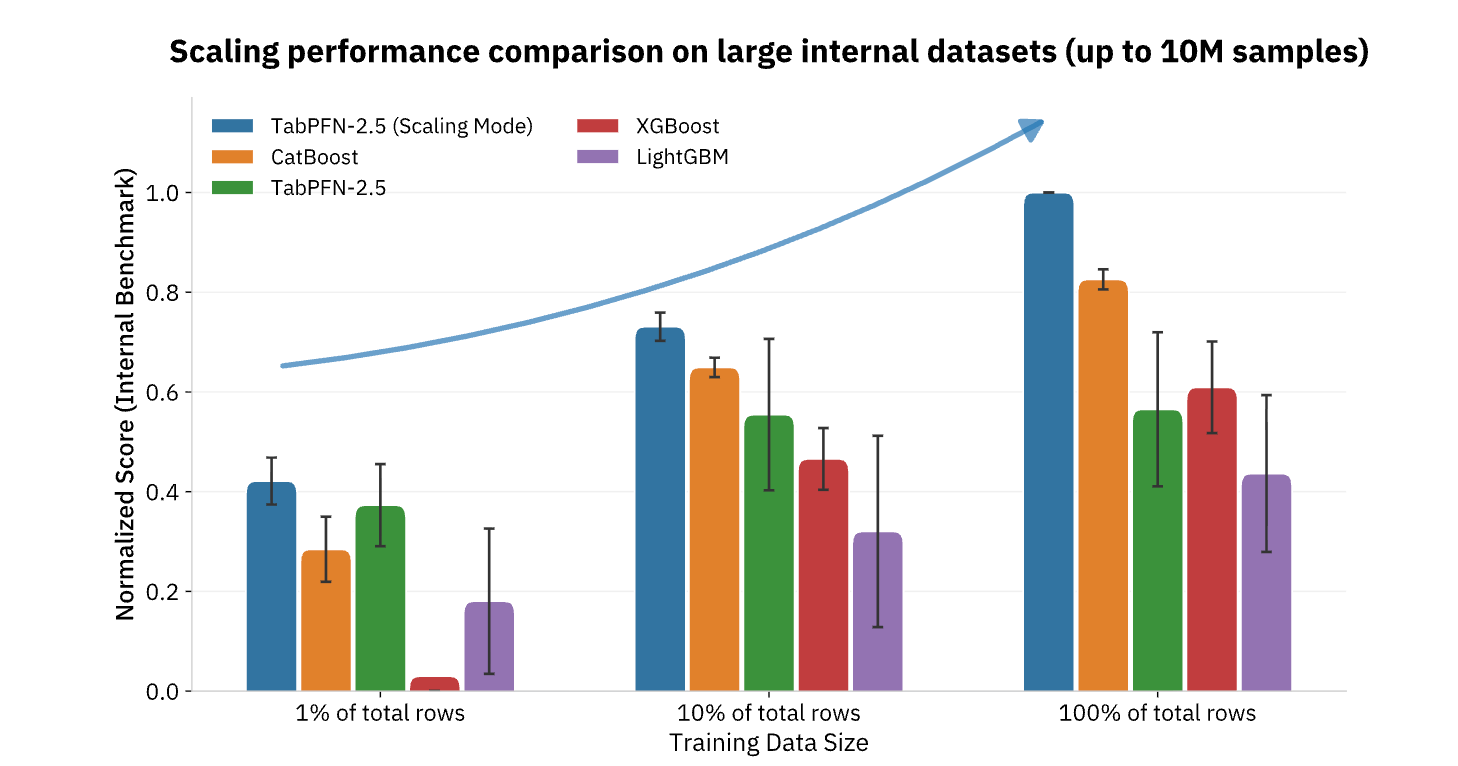

TabPFN currently supports datasets up to 100,000 rows and 2,000 features, with enterprise versions extending to 10 million rows, covering the vast majority of operational ML use cases across retail, finance, healthcare, manufacturing, and other industries. For organizations seeking to operationalize AI beyond content generation and natural language tasks, foundation models like TabPFN represent the missing piece, bringing the same step-function productivity improvements to the structured data and predictive analytics that have long formed the backbone of data-driven decision-making (Figure 3).
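Before sending a table to the model, it can help to check it against the size limits quoted above. The helper below is a small sketch under stated assumptions: `check_limits` is a hypothetical name, the table is assumed to be a list of equal-length rows, and the defaults simply restate the 100,000-row and 2,000-feature figures from the text.

```python
from typing import Any, List, Sequence

MAX_ROWS = 100_000     # row limit quoted in the text
MAX_FEATURES = 2_000   # feature limit quoted in the text


def check_limits(
    rows: Sequence[Sequence[Any]],
    max_rows: int = MAX_ROWS,
    max_features: int = MAX_FEATURES,
) -> List[str]:
    """Return a list of human-readable problems; an empty list means the table fits."""
    problems = []
    n_rows = len(rows)
    # Assumes every row has the same number of columns as the first one.
    n_features = len(rows[0]) if n_rows else 0
    if n_rows > max_rows:
        problems.append(f"{n_rows} rows exceeds the limit of {max_rows}")
    if n_features > max_features:
        problems.append(f"{n_features} features exceeds the limit of {max_features}")
    return problems


small = [[0.0] * 10 for _ in range(500)]
wide = [[0.0] * 2001 for _ in range(3)]
print(check_limits(small))
print(check_limits(wide))
```

A table that fails the check would need to be sampled or narrowed first, or routed to a version of the model with higher limits.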

TabPFN is already powering many real-world applications for companies around the globe. Deployments across domains, from financial risk management with Taktile to health outcome evaluation with the NHS and predictive maintenance with Hitachi, have improved both efficiency and the quality of outcomes. TabPFN consistently outperforms traditional ML methods, improving on the baseline by 10% to 65% and speeding up data science workflows by 90%. Organizations are unlocking increased revenue, better health outcomes, maintenance cost savings, churn prevention, and much more.

Using TabPFN with Databricks

Databricks has long been the preferred platform for data scientists seeking to build predictive capabilities with Machine Learning (ML). Because Databricks is an open platform, TabPFN is well suited for use within it.

Build Where the Data Lives

Most enterprise classical ML starts from Lakehouse data: transactions, operational telemetry, customer events, inventory signals, and risk indicators. Moving that data into external environments slows teams down by creating duplication, increasing security risk, and weakening reproducibility and auditability. Databricks enables TabPFN workflows directly alongside governed data, so teams can minimize data movement while maintaining controls. With Unity Catalog, organizations centralize access control and auditing and preserve lineage across data and AI assets, which matters when you need to prove what data was used, how features were derived, and who had access at decision time.

Efficiently Operationalize Results

TabPFN is a modeling approach. To create production impact, it must integrate with repeatable enterprise patterns such as batch and real-time scoring, evaluation, governance, and monitoring. Databricks is a strong platform for these workflows, with scalable compute and real-time inference infrastructure that can turn TabPFN into a reliable operational process. For evaluation and monitoring, MLflow provides experiment tracking and a model registry to manage versions, lineage, and promotion workflows in an auditable way.

Provide Ongoing Model Governance

Databricks provides continuous monitoring of TabPFN model performance, detecting when predictions begin to drift from actual business outcomes. When adjustments are needed, TabPFN’s architecture eliminates the traditional weeks-long retraining cycle: teams simply update the model’s context with recent data and redeploy within minutes rather than days. This combination of automated monitoring and rapid refresh capability ensures prediction quality remains aligned with changing market conditions while dramatically reducing the data science resources typically required for ongoing model maintenance.
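The monitor-then-refresh loop described above can be illustrated with a simple rolling check. This is a sketch of the general idea only, not the Databricks monitoring feature: `DriftMonitor` is a hypothetical class, and the use of accuracy as the metric, the window size, and the tolerance are all illustrative assumptions.

```python
from collections import deque
from typing import Any, Optional


class DriftMonitor:
    """Tracks rolling accuracy over the most recent `window` scored rows."""

    def __init__(self, baseline_accuracy: float, window: int = 100, tolerance: float = 0.05):
        self.baseline = baseline_accuracy
        self.tolerance = tolerance
        # A bounded deque drops the oldest result once the window is full.
        self.hits = deque(maxlen=window)

    def record(self, predicted: Any, actual: Any) -> None:
        """Store whether a prediction matched the observed business outcome."""
        self.hits.append(1 if predicted == actual else 0)

    def rolling_accuracy(self) -> Optional[float]:
        return sum(self.hits) / len(self.hits) if self.hits else None

    def drifted(self) -> bool:
        """Flag drift only once the window is full and accuracy falls below tolerance."""
        if len(self.hits) < self.hits.maxlen:
            return False
        return self.rolling_accuracy() < self.baseline - self.tolerance


monitor = DriftMonitor(baseline_accuracy=0.9, window=10, tolerance=0.05)

# Ten correct predictions fill the window.
for _ in range(10):
    monitor.record("churn", "churn")
stable = monitor.drifted()

# Three misses push the oldest three hits out of the window.
for _ in range(3):
    monitor.record("churn", "stay")
degraded = monitor.drifted()
accuracy = monitor.rolling_accuracy()
print(stable, degraded, accuracy)
```

When the flag is raised, the response in this workflow would be to refresh the model's context with recent labeled rows rather than to launch a retraining job.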

To help teams test TabPFN with minimal setup, we published a publicly available solution accelerator that shows how to run TabPFN end-to-end on Databricks with governed Lakehouse data. The accelerator includes a series of notebooks that realistically simulate data from a variety of industry scenarios and build predictions using TabPFN (Video 1).

Get started today and bring the transformative power of AI to your ML workloads, driving business process transformation across the board.