This blog is jointly written by Md Rahman, Arkaprabho Ghosh, Navin Bilwar, and Desh Shukla.

Executive summary

Cisco IT recently evaluated embedding model fine-tuning with the NVIDIA Nemotron RAG fine-tuning recipe as part of an effort to improve retrieval accuracy on domain-specific enterprise data. The objective was not to redesign existing retrieval-augmented generation (RAG) systems, but to understand whether targeted embedding fine-tuning could materially improve semantic search quality with reasonable effort and fast turnaround. Through this experiment, Cisco validated firsthand that embedding fine-tuning, combined with synthetic data generation, can deliver measurable accuracy gains within a short time frame. The experiment also demonstrated strong time-to-value, enabling rapid iteration and clear performance signals without long training cycles or extensive manual labeling. Needing only a few days to understand the immediate benefits was a key outcome of this collaboration.

The embedding model training and evaluation workflow was executed on Cisco AI PODs running Cisco UCS 885A infrastructure powered by the NVIDIA HGX platform.

Problem statement

Before this experiment, Cisco had run similar embedding fine-tuning experiments using earlier-generation models and smaller-scale infrastructure. Those efforts required significant manual tuning of hyperparameters such as batch size and number of epochs, and results were often difficult to stabilize. Iteration cycles were long, making it challenging to explore different configurations or scale experiments. Despite some localized improvements, keyword search remained necessary for many domain-specific retrieval scenarios. There was also no standardized, end-to-end workflow that engineering teams could execute quickly and evaluate consistently across runs. These efforts often took weeks to months of manual work for uncertain gains.

How the fine-tuning went and time to value

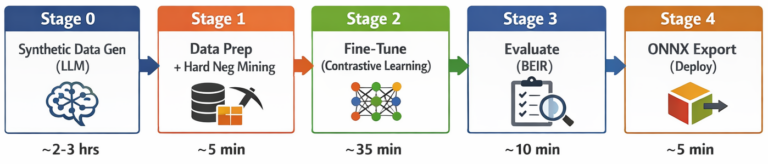

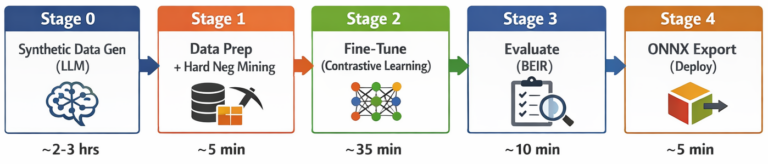

In this experiment, Cisco used the NVIDIA NeMo Retriever embedding fine-tuning recipe, leveraging synthetic data generation to produce training signals from existing corpora. The recipe runs through five distinct stages: synthetic data generation (SDG), data preparation with hard-negative mining, contrastive fine-tuning, BEIR evaluation, and ONNX model export. The workflow ran end to end successfully. All experiments ran on a single NVIDIA H200 143 GB GPU hosted within Cisco AI Pods built on Cisco UCS 885A systems. Fine-tuning runs completed within hours, enabling rapid experimentation across multiple dataset sizes and configurations. Synthetic data generation eliminated the need for manual labeling, significantly reducing overhead. This approach allowed Cisco to iterate quickly, observe performance trends early, and validate whether embedding fine-tuning was worth further investment. The overall time-to-value was substantially shorter than in previous efforts, with meaningful insights gained after only a small number of runs.
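To make the data-preparation and fine-tuning stages more concrete, here is a minimal Python sketch of hard-negative mining followed by a contrastive (InfoNCE-style) loss. It is an illustration under stated assumptions, not the recipe's implementation: the function names, the toy two-dimensional vectors, cosine scoring, and the temperature of 0.05 are all hypothetical, and the loss the recipe actually uses may differ. The default of five negatives per query mirrors the current setting mentioned in the next-steps list.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def mine_hard_negatives(query_vec, doc_vecs, positive_ids, k=5):
    """Return ids of the k non-positive documents most similar to the query."""
    scored = [
        (cosine(query_vec, vec), doc_id)
        for doc_id, vec in doc_vecs.items()
        if doc_id not in positive_ids
    ]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc_id for _, doc_id in scored[:k]]


def info_nce_loss(pos_score, neg_scores, temperature=0.05):
    """-log softmax of the positive score against the negative scores."""
    logits = [pos_score / temperature] + [s / temperature for s in neg_scores]
    peak = max(logits)  # subtract the max for numerical stability
    log_sum = peak + math.log(sum(math.exp(l - peak) for l in logits))
    return log_sum - logits[0]


# Toy corpus (hypothetical embeddings); "d1" is the labeled positive.
docs = {
    "d1": [1.0, 0.0],
    "d2": [0.9, 0.1],
    "d3": [0.0, 1.0],
    "d4": [0.7, 0.7],
}
query = [1.0, 0.0]

hard_negatives = mine_hard_negatives(query, docs, positive_ids={"d1"}, k=2)
# -> ['d2', 'd4']

pos = cosine(query, docs["d1"])
loss_hard = info_nce_loss(pos, [cosine(query, docs[d]) for d in hard_negatives])
loss_easy = info_nce_loss(pos, [cosine(query, docs["d3"])])
```

In this toy setup `loss_hard` is larger than `loss_easy`: negatives that sit close to the query leave more for the model to correct, which is the intuition behind mining hard negatives rather than sampling random ones.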

The five-stage pipeline architecture:

Timings based on ~925 documents / ~9,200 QA pairs / ~7,800 training examples on a single NVIDIA H200 GPU running on Cisco AI Pods with Cisco UCS 885A infrastructure. Actual duration scales with data volume.


Accuracy gains observed

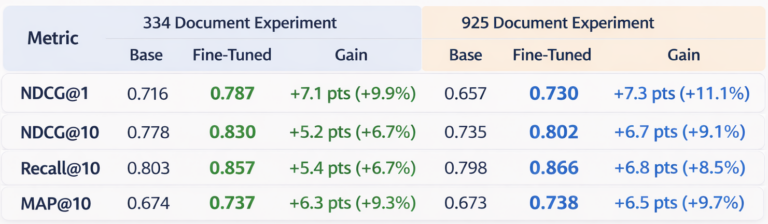

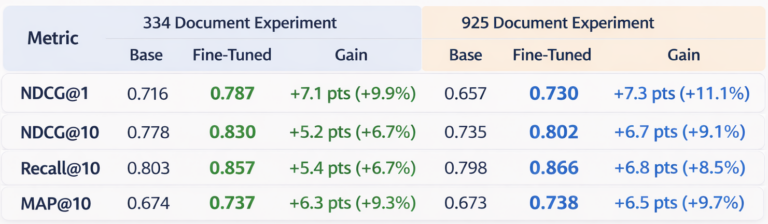

Across multiple experiments, the results showed consistent, measurable improvements. Fine-tuning the NVIDIA 1-billion-parameter NV-EmbedQA model on synthetic domain-specific data improved every retrieval metric: NDCG@1 rose by 7.1 to 7.3 absolute points (9.9% to 11.1% relative), Recall@10 by up to 6.8 points (8.5%), and MAP@10 by up to 6.5 points (9.7%). Because an on-premises 120B-parameter LLM handled synthetic data generation, the entire pipeline ran with zero external API costs, and the data never left the premises, which preserved data privacy. These gains held even as dataset size increased and retrieval tasks became more challenging. Importantly, improvements were observed on domain-specific queries that previously performed poorly with base embedding models. While these results represent an initial baseline rather than a fully optimized outcome, they provided strong confirmation that embedding fine-tuning can materially improve retrieval quality for enterprise-specific data.
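For readers less familiar with these metrics, the sketch below shows one common way to compute NDCG@k, Recall@k, and average precision at k for a single query with binary relevance, plus the absolute-versus-relative gain arithmetic used above. It is a simplified illustration, not the BEIR implementation: the function names are hypothetical, conventions for the MAP denominator vary between toolkits, and the scores passed to `gains` are made-up numbers rather than the baselines from this experiment. MAP@k is the mean of the per-query average precision.

```python
import math


def ndcg_at_k(ranked_ids, relevant_ids, k):
    """Binary-relevance NDCG@k for one query."""
    dcg = sum(
        1.0 / math.log2(rank + 2)
        for rank, doc_id in enumerate(ranked_ids[:k])
        if doc_id in relevant_ids
    )
    ideal_hits = min(len(relevant_ids), k)
    idcg = sum(1.0 / math.log2(rank + 2) for rank in range(ideal_hits))
    return dcg / idcg if idcg else 0.0


def recall_at_k(ranked_ids, relevant_ids, k):
    """Fraction of relevant documents found in the top k."""
    if not relevant_ids:
        return 0.0
    hits = sum(1 for doc_id in ranked_ids[:k] if doc_id in relevant_ids)
    return hits / len(relevant_ids)


def average_precision_at_k(ranked_ids, relevant_ids, k):
    """Average precision over the top k, divided by the number of relevant docs."""
    hits, total = 0, 0.0
    for rank, doc_id in enumerate(ranked_ids[:k], start=1):
        if doc_id in relevant_ids:
            hits += 1
            total += hits / rank
    return total / len(relevant_ids) if relevant_ids else 0.0


def gains(base, tuned):
    """Return (absolute gain in points, relative gain in percent)."""
    absolute = tuned - base
    return absolute, 100.0 * absolute / base


# One query whose single relevant document is ranked second.
ranked = ["d3", "d1", "d2"]
relevant = {"d1"}
ndcg1 = ndcg_at_k(ranked, relevant, 1)               # 0.0: top result is not relevant
recall10 = recall_at_k(ranked, relevant, 10)         # 1.0: the relevant doc is in the top 10
ap10 = average_precision_at_k(ranked, relevant, 10)  # 0.5: first hit at rank 2

# Illustrative scores only: 50.0 -> 55.0 is +5.0 points, or +10% relative.
abs_gain, rel_gain = gains(50.0, 55.0)
```

The example query shows why NDCG@1 is the strictest of the three: the relevant document is retrieved, so Recall@10 is perfect, but because it is not ranked first, NDCG@1 is zero.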

Summary of experiments

Table 1. Retrieval performance comparison between the base embedding model and the contrastively fine-tuned model across two dataset sizes (334 and 925 documents). Fine-tuning consistently improves ranking quality across all BEIR evaluation metrics.


Key Observations:

- Fine-tuning consistently improved retrieval quality across all metrics.

- NDCG@1 showed the largest improvement, reflecting better relevance at the top-ranked position.

- Gains were stable across the two dataset sizes (334 and 925 documents).

- Recall@10 and MAP@10 gains indicate better coverage and ranking than the base embedding model.

What surprised us

The most unexpected finding was how quickly the recipe delivered actionable results. Within a few days of starting the experiment, we had measurable accuracy improvements — a stark contrast to previous efforts that took weeks to months. The synthetic data generation approach produced training signals of sufficient quality to drive meaningful gains without a single manually labeled example. We were also surprised by how well the improvements generalized across query types, including the rare-token identifier queries that had historically been the weakest point for semantic search.

Next steps for the engagement

Building on these results, Cisco will continue working with NVIDIA to systematically push accuracy further. The next phase of work will focus on:

- Using a fixed evaluation set across runs so that metrics will be directly comparable

- Tuning the learning rate (trying default, half, and double) and increasing epochs from 3 to 5

- Scaling training data to ~100K QA pairs to find the saturation point for the domain

- Using a larger or higher-quality LLM for synthetic data generation to improve QA pair fidelity

- Applying 10% warmup with cosine decay for more stable convergence

- Increasing hard-negative mining from 5 to 10 negatives per query for a stronger contrastive signal

- Refining synthetic data generation prompts to better emphasize rare and domain-specific terms — bug IDs, product identifiers, firmware versions — where base models struggle most

- Exploring chunk-aware training: using real document chunks from a production vector database as the retrieval corpus, generating questions against those chunks via the LLM, and mapping each question to its positive chunk and hard-negative chunks — training the model on the same data distribution it will encounter in production, where answers may be buried in longer text and chunking strategies will vary
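Two of the planned changes above, the learning-rate sweep and the 10% warmup with cosine decay, can be sketched in a few lines of Python. This is a minimal illustration under assumptions, not the recipe's scheduler: the function name `lr_at_step`, the linear warmup shape, the base learning rate of 1e-5, and the 100-step run length are placeholders chosen for readability.

```python
import math


def lr_at_step(step, total_steps, peak_lr, warmup_fraction=0.10, min_lr=0.0):
    """Linear warmup to peak_lr, then cosine decay toward min_lr."""
    warmup_steps = max(1, int(total_steps * warmup_fraction))
    if step < warmup_steps:
        # Ramp up linearly; the last warmup step reaches peak_lr.
        return peak_lr * (step + 1) / warmup_steps
    # Fraction of the decay phase completed so far, in [0, 1).
    progress = (step - warmup_steps) / max(1, total_steps - warmup_steps)
    return min_lr + 0.5 * (peak_lr - min_lr) * (1.0 + math.cos(math.pi * progress))


base_lr = 1e-5      # placeholder, not the recipe's default
total_steps = 100   # placeholder run length

# Sweep over half, default, and double the base learning rate.
sweep = {
    factor: [lr_at_step(s, total_steps, base_lr * factor) for s in range(total_steps)]
    for factor in (0.5, 1.0, 2.0)
}

schedule = sweep[1.0]
start_lr, peak_lr, final_lr = schedule[0], max(schedule), schedule[-1]
```

With these placeholder values, the schedule climbs over the first 10 steps, peaks at `base_lr`, and then decays smoothly toward zero, which avoids large early updates while the optimizer state is still warming up.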

Longer term, the engagement will expand to include re-ranker fine-tuning and broader retrieval optimization as part of a full end-to-end RAG improvement effort.

Value of embedding model fine-tuning

This experiment supports the view that a validated, end-to-end embedding fine-tuning workflow can accelerate time to production, delivering measurable improvements in days rather than months. The ideas and findings from this work are actively shaping the recipe’s evolution, while Cisco gains early access to a maturing pipeline that shortens the path from experimentation to production. The work also demonstrates how Cisco AI Pods based on Cisco UCS 885A systems and NVIDIA H200 GPUs can provide an effective enterprise infrastructure foundation for rapid embedding model adaptation.

Key benefits of embedding model fine-tuning for businesses

- Protect proprietary data (on-premises execution)

- Reduce support costs (faster resolution, fewer escalations)

- No cloud API dependency (zero external costs)

- Fast time-to-value (full end-to-end pipeline — all 5 stages including SDG, mining, training, evaluation, and export — completes in 2-5 hours on a single GPU)

Key benefits of embedding model fine-tuning for developers

- No manual annotation required (synthetic data generation)

- Modular, hackable architecture (5 distinct stages: SDG → Data Prep → Fine-Tune → Evaluate → Export)

- Production-ready outputs (ONNX export)

- Built-in evaluation (BEIR — Benchmarking Information Retrieval — framework)

- Hard negative mining included (automatic quality boost)

Get started

The fine-tuning recipe for the Llama Nemotron Embed 1B model is available now as a complete, production-ready pipeline. Whether you’re building enterprise search, RAG applications, or domain-specific retrieval systems, this recipe provides a clear path from raw documents to deployed, domain-adapted embeddings.

Ready to fine-tune your own embedding model?

👉 Explore the Nemotron Embed Fine-Tuning Recipe on GitHub